Self-Evolving AI Agents: How Models Learn to Improve Without Human Data

Trends in ICLR 2026 RSI workshop - Self-Evolving Agents

W12 Basic Tutorial · Beginner · March 2026

Research Area: Recursive Self-Improvement (RSI)

Companion Notebook:

01_agent0_coevolution_toy.ipynb— a hands-on toy demo of the co-evolutionary loop described below. CPU only, runs in under a minute.

What Are Self-Evolving Agents?

Imagine learning to play chess without anyone teaching you the rules — you just play games against yourself, getting better each time. That's essentially what self-evolving AI agents do: they improve their own capabilities without needing new data from humans.

Traditional AI training works like a school: humans prepare training materials (data), and the model learns from them. Self-evolving agents flip this model — the AI becomes both the student and the teacher, generating its own curriculum and learning from it.

Why Does This Matter?

There's a growing problem in AI: we're running out of high-quality training data. Human-created data is expensive, slow to produce, and finite. If AI systems can learn to improve themselves, they could continue getting better without waiting for humans to create more training examples.

This matters for practical applications too. Consider a coding assistant: instead of needing millions of human-written code examples, a self-evolving system could generate its own programming challenges, attempt solutions, verify them by running the code, and learn from both successes and failures.

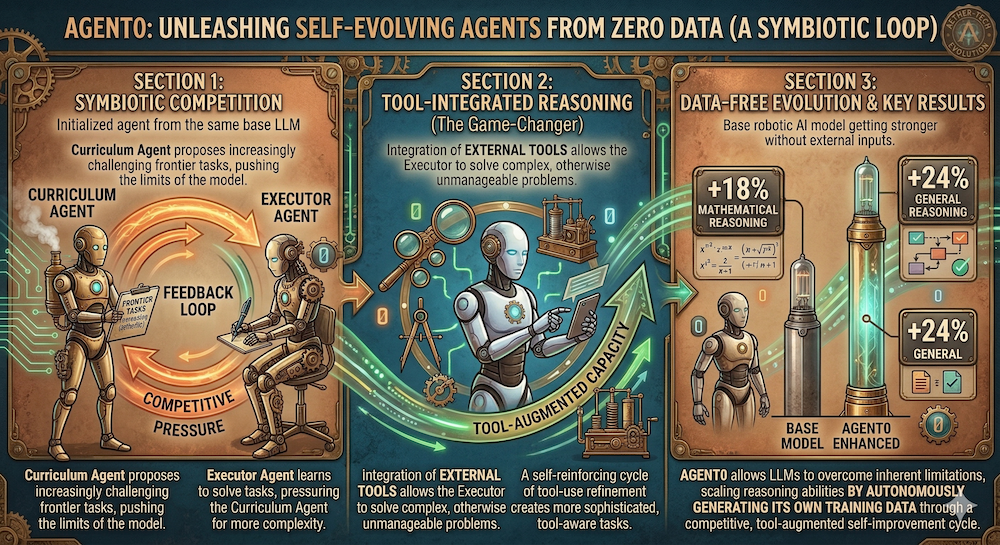

The Two-Agent Architecture: Agent0

A recent paper called Agent0 (OpenReview), presented as an Oral paper at the ICLR 2026 RSI Workshop, shows how to build a self-evolving agent starting from zero external data.

The design uses two agents, both initialized from the same base language model:

The Curriculum Agent acts like a teacher. Its job is to propose tasks — problems for the other agent to solve. Crucially, it doesn't propose random tasks. It learns to propose tasks that are just hard enough to challenge the solver without being impossible.

The Executor Agent acts like a student. It attempts to solve the proposed tasks, using external tools (calculators, code execution, web search) along the way. As it gets better, it pressures the curriculum agent to create harder, more tool-aware problems.

This creates a co-evolutionary loop: as the student improves, the teacher adapts, which pushes the student further, which pushes the teacher further, and so on.

What Makes This Work?

The key is the concept of learnable information gain — tasks need to sit in a "Goldilocks zone" of difficulty:

- Too easy: The agent already knows how to solve it, so there's no learning signal

- Too hard: The agent has no chance of solving it, so there's no useful feedback

- Just right: The agent can't solve it yet, but has enough capability to learn from the attempt

Agent0 achieves this adaptive difficulty naturally through co-evolution. Starting from Qwen3-8B-Base with zero external data, it improves mathematical reasoning by 18% and general reasoning by 24%.

The Self-Play Approach: Learning Through Competition

Another approach comes from Language Self-Play (LSP) (OpenReview), which uses game-theoretic competition. Instead of a teacher-student pair, a single model's capabilities are cast as performance in competitive games. The model plays against itself — and stronger strategies emerge from this self-competition, much like how chess engines improve through self-play.

LSP demonstrates that even a relatively small model (Llama-3.2-3B-Instruct) can improve on instruction-following, math, and coding benchmarks using self-play alone.

Why Can't We Just Do This Naively?

If self-improvement sounds straightforward, there's a catch: it often fails. Research has identified several common failure modes:

Mode collapse: The agent learns to generate easy tasks it can always solve, then stops improving because there's no challenge left.

Degenerate curricula: The teacher proposes tasks that are technically hard but not useful — like generating nonsensical math problems that don't build real reasoning skills.

Contextual drag: A recent paper (OpenReview) shows that when models try to learn from their past mistakes, those mistakes can actually contaminate future reasoning. Our own reproduction (NB 02) confirms this: just one failed attempt in context drops accuracy by 15–18 pp, and structural analysis shows the model doesn't just fail more — it copies the same error patterns from context (ROUGE-L similarity of 0.71–0.85 between new errors and context errors, vs. 0.40 for clean responses). Even telling the model "the above was wrong" recovers only 2–3 pp. The co-evolutionary approach from Agent0 is inherently resistant to this because it generates new tasks instead of retrying failed ones.

These failure modes are why naive self-improvement loops often stall or degrade. The research community is actively building diagnostics and guardrails to prevent them.

What's Next?

Self-evolving agents are moving fast. The ICLR 2026 RSI Workshop (April 26–27, Rio de Janeiro) features 110 papers on this topic — a sign that the field is transitioning from isolated experiments to systematic engineering.

Key open questions include:

- Scaling: Do these approaches work for very large models (100B+ parameters), or do new failure modes emerge?

- Multimodal self-evolution: Can vision-language models improve themselves the same way text-only models can?

- Safety: If agents improve without human oversight, how do we ensure they improve in directions aligned with human values?

Key Takeaways

- Self-evolving agents can improve without external data by generating their own training curricula

- The co-evolutionary approach (Agent0) uses two agents — a curriculum generator and a task executor — that push each other to improve

- Self-play (LSP) offers an alternative: models improve by competing against themselves

- Naive self-improvement often fails through mode collapse, degenerate curricula, or contextual drag

- This is now a systems engineering challenge, not just a research curiosity

References

- Agent0: Unleashing Self-Evolving Agents from Zero Data via Tool-Integrated Reasoning — Xia et al. (ICLR 2026 Workshop RSI, Oral)

- Language Self-Play For Data-Free Training — Kuba et al. (ICLR 2026 Workshop RSI, Spotlight)

- Contextual Drag: How Errors in the Context Affect LLM Reasoning — Cheng et al. (ICLR 2026 Workshop RSI, Oral)

- ICLR 2026 Workshop on AI with Recursive Self-Improvement

Stay connected:

- 📧 Subscribe to our newsletter for updates

- 📺 Watch our YouTube channel for AI news and tutorials

- 🐦 Follow us on Twitter for quick updates

- 🎥 Check us on Rumble for video content